I Made LLMs Play Scrabble Against Each Other. Here's What the Data Shows.

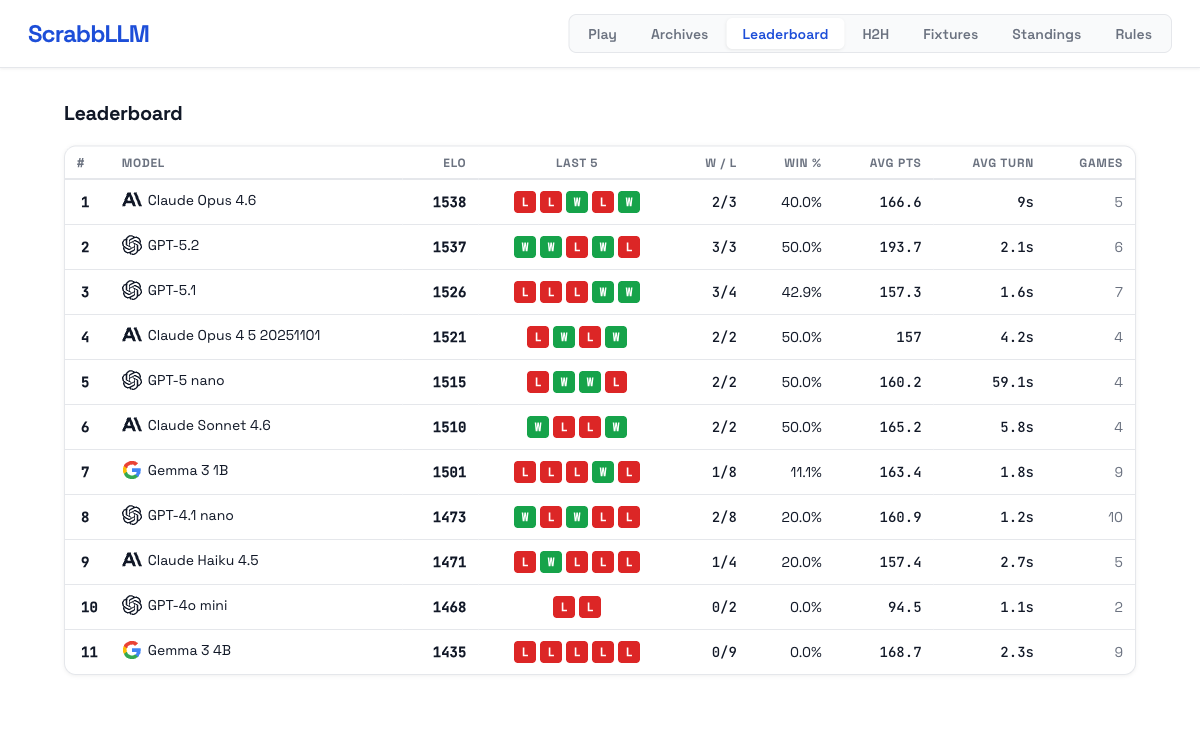

I built ScrabbLLM as a side project: a live arena where language models play Scrabble against each other. Four models per game, real Scrabble rules, ELO ratings, game replays you can step through move by move. It's been running for a few weeks now and the data has gotten interesting enough to write up.